100 Top Tips: Microsoft Excel

Power up your Microsoft Excel skills with this powerful pocket-sized book of tips that will save you time and help you learn more from your spreadsheets.

28 July 2014

A Google Glass device in use.

Here are the key takeaways I noted: It's early days for Google Glass, and there was a lot of excitement in the room just seeing the devices and trying them out. There's clearly a lot of potential for innovative new applications, particularly for platform-based services. These will be better able to build a business model on a device that is not conducive to advertising and does not yet support monetisation. What are your thoughts on Google Glass?

© Sean McManus. All rights reserved.

Visit www.sean.co.uk for free chapters from Sean's coding books (including Mission Python, Scratch Programming in Easy Steps and Coder Academy) and more!

This book, now fully updated for Scratch 3, will take you from the basics of the Scratch language into the depths of its more advanced features. A great way to start programming.

Code a space adventure game in this Python programming book published by No Starch Press.

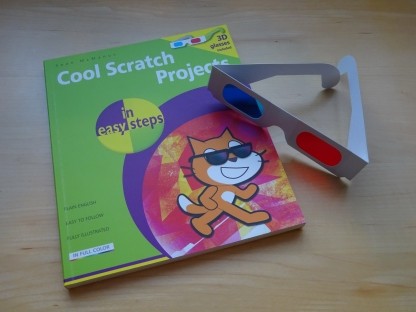

Discover how to make 3D games, create mazes, build a drum machine, make a game with cartoon animals and more!

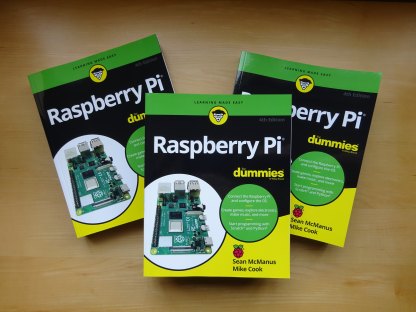

Set up your Raspberry Pi, then learn how to use the Linux command line, Scratch, Python, Sonic Pi, Minecraft and electronics projects with it.

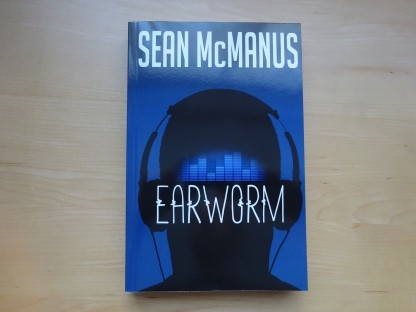

In this entertaining techno-thriller, Sean McManus takes a slice through the music industry: from the boardroom to the stage; from the studio to the record fair.